...the Scrum Team is producing potentially shippable increments every Sprint.

✥ ✥ ✥

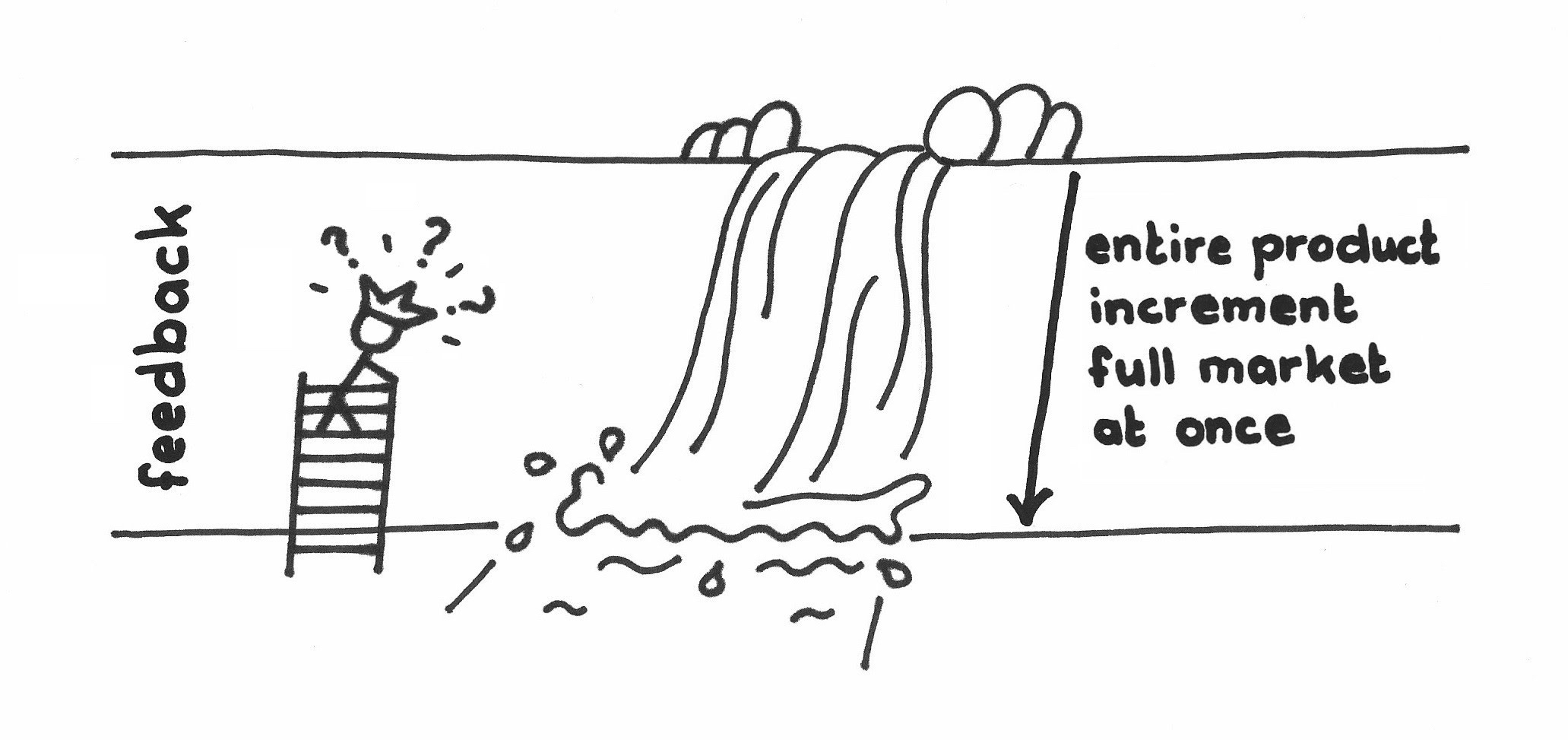

Stakeholder feedback is crucial to agile development and to the ongoing improvement and growth of the product, but it may be costly and risky to ship every release to the whole market. Testing and quality gates are one way to generate incremental, internal feedback, but internal evaluations don’t really measure whether the product is generating real value in the environment of its intended use. Such evaluations also risk creating an inventory of finished goods rather than putting those goods to work in the market.

Though it would be ideal if products were perfect out of the gate, most complex products improve over time with use and experience. Some features may become useful early in the development life cycle of a product, but some markets may need to aggregate the work of several Sprints to realize any value from the product.

You can never test enough, but you can’t wait for an endless test suite to finish before shipping. The market will always exercise your product far more than most enterprises can afford to in-house. Still, you want to avoid using customers as your primary source of feedback about whether the product does what was agreed and expected.

All customers would of course rather have a full product in hand sooner rather than later, but premature revisions of the product may still lack conveniences that come only with market experience. Though a feature may be on the right track the first time the team pronounces it Done (see Definition of Done), agile tells us that we are unlikely to get it right the first time and that market feedback helps steer the product in the right direction. Customers fall along a spectrum, ranging from those with zero tolerance for such inconveniences to those with a higher appetite for early product availability, even at the cost of some awkwardness.

For example, a vendor may produce a Java compiler (a compiler is a computer tool that takes a programmer’s instructions for how a computer should behave and turns them into a computer program that a third party can use). The compiler vendor’s client may in turn use that compiler to produce its own product, such as a spreadsheet program. Even if the compiler isn’t perfect or complete, it may be good enough for the spreadsheet company to start framing out a new product before the vendor implements every feature in the dark corners of Java. An aerospace manufacturer that uses Java to update its flight-control software in the field, on the other hand, will insist on a fully featured, high-quality Java compiler out of the box from the vendor.

You could just delay releasing product for several Sprints with the hope that the quality will be good enough at the time you release it. But fitness for use in the real world is unlikely to improve without real feedback, and you won’t really know when the quality reaches a given threshold of acceptability unless it’s in real use.

On the other hand, some customers are eager to capitalize on whatever partial benefit they can glean, as early as possible, even though the product isn’t complete, or refined to popular taste, or ready for general use.

Different customers tolerate different rates of change. Customer readiness for a new release may be bounded not only by functional product completeness but also by the cost or risk of change. As in the compiler example above, some customers may want to be on the bleeding edge of technology while others want a more shaken-down version that reflects the corrections the vendor has made in response to market feedback. Sometimes major product changes require substantial training or adaptation efforts, and some market segments may tolerate such undertakings more often than others.

Therefore:

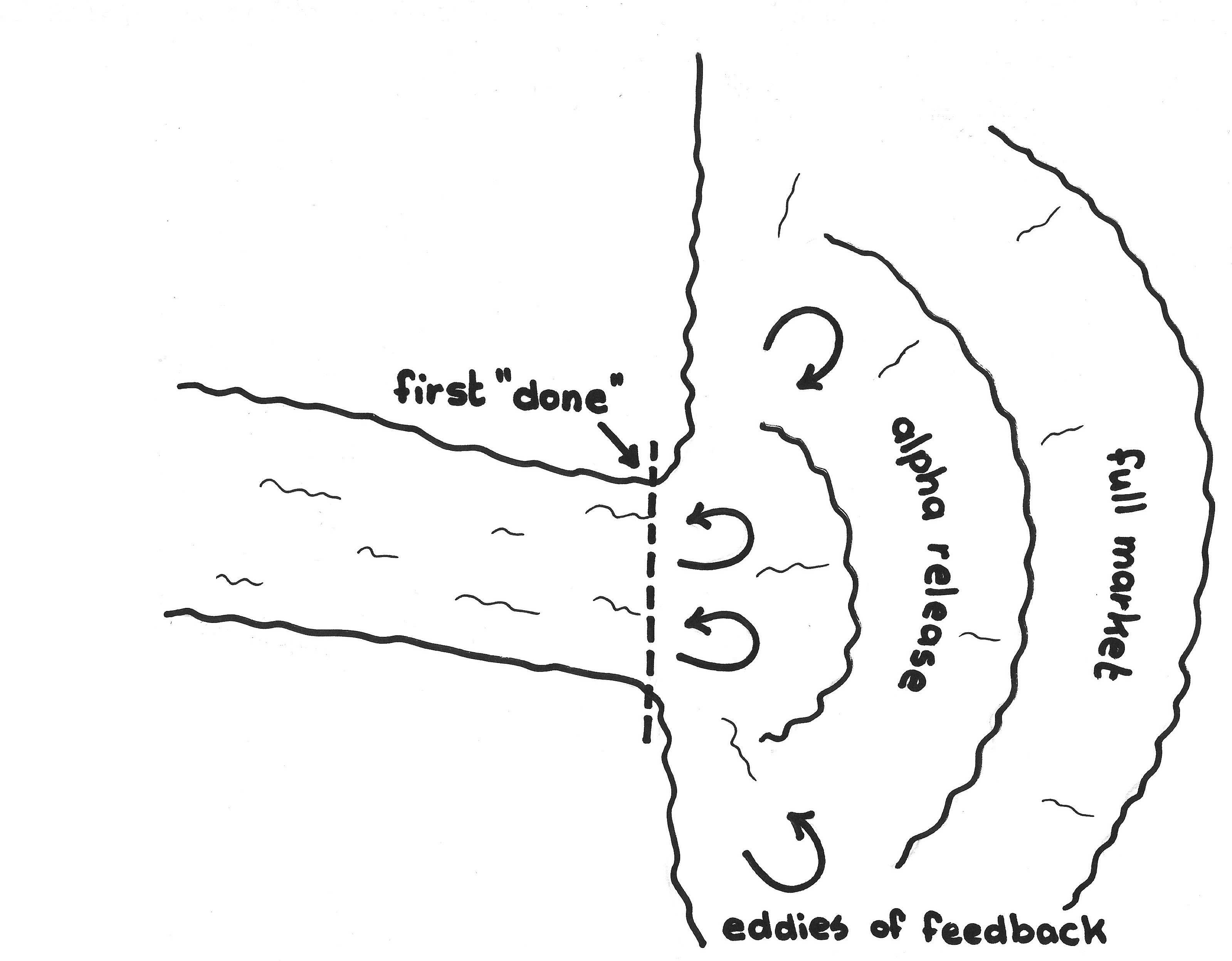

Identify a gradient of markets for releases, ranging from beta testers to full public release, and release every Sprint to some constituency suited to the maturity of the product increment and to market conditions.

This does not mean that the teams assembling the product “release” it to the testers. Product release should be downstream from quality assurance efforts in the Value Stream. Though every downstream consumer in the Value Stream will still likely give feedback to the Scrum Team, the Scrum Team should maintain a “zero defects” mentality leading up to any release to any downstream stakeholder. Early engagement with (as opposed to release to) downstream stakeholders can help a lot, starting with their input to a Refined Product Backlog and ending with the feedback they provide in Sprint Review and the kind of engagement described in this pattern. The best arrangement is a Development Partnership between the Scrum Team and the client that engenders enough trust that “release” becomes a low-ceremony formality, instead of a high-ceremony quality gate that triggers some large set of quality-control activities.

✥ ✥ ✥

Deliver a Regular Product Increment to someone every Sprint to elicit feedback; if you can’t, it’s hard to claim that the team is agile. Perhaps start with a beta release, followed by a release to close partners and eventually to the entire market. “Close partners” might be individual or corporate clients who have a higher risk appetite than the market average, and who can benefit from early and even partial use of your product. In return for that benefit, you request that these partners provide feedback on problems, and you as a vendor can promise to do your best to resolve reported issues using the Responsive Deployment mechanisms already in place. Try to develop a partner relationship with a nearby circle of clients as described in Development Partnership. You can ship less frequently to the general market after feedback solidifies the product, and there may be a small number of intermediate layers between these two extremes. You still get feedback from some constituency every Sprint, but you don’t put primary stakeholders at risk of receiving a product they don’t like.

Over time this strategy helps to provide the Greatest Value to the most stakeholders.

Good Value Streams operate on cadence so that expectations of all stakeholders can align along Sprint boundaries. Run these feedback cycles on a Sprint cadence. On both the possibility and possible futility of continuous deployment, see Responsive Deployment.

The longstanding practice of beta product releases is a common example of this pattern. Facebook always releases first to its in-house employees, all of whom are served the most recent Facebook build when they use the product. Facebook then releases new capabilities to “the a2 tier” by deploying them to a small subset of its servers, which exposes the new release to an essentially random group of users. The company eventually finishes with a full release [1]. Skype maintained a similar release structure.

Google maintains beta, Developer, and “Canary” deployment channels for Chrome that trade off risk against new features. The Canary channel “refers to the practice of using canaries in coal mines, so if a change ‘kills’ Chrome Canary, it will be blocked from migrating down to the Developer channel, at least until fixed in a subsequent Canary build.” [2]
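The gating rule in that quotation can be sketched as a small promotion function. This is a minimal illustration under stated assumptions, not Google’s actual release tooling: the channel list, the `Build` record, the `promote` and `report_failure` helpers, and the build names are all hypothetical, and a real pipeline would detect a “killed” build from crash reports and telemetry rather than from a manual flag.

```python
from dataclasses import dataclass, field

# Hypothetical channel order, riskiest first; the names loosely mirror
# the Canary, Developer, beta, and full-release channels described above.
CHANNELS = ["canary", "developer", "beta", "stable"]


@dataclass
class Build:
    version: str                      # hypothetical build identifier
    channel: str = "canary"           # every build starts in the riskiest channel
    failed_in: set = field(default_factory=set)


def report_failure(build: Build) -> None:
    """Record that this build 'killed' the channel it currently occupies."""
    build.failed_in.add(build.channel)


def promote(build: Build) -> bool:
    """Move the build one channel toward full release, unless it failed where it is."""
    if build.channel in build.failed_in:
        return False  # blocked: the fix has to arrive in a later build
    position = CHANNELS.index(build.channel)
    if position == len(CHANNELS) - 1:
        return False  # already in the widest channel
    build.channel = CHANNELS[position + 1]
    return True


# A healthy build works its way through every channel.
healthy = Build("build-101")
while promote(healthy):
    pass
print(healthy.channel)  # stable

# A build that fails in Canary stays there.
broken = Build("build-102")
report_failure(broken)
print(promote(broken), broken.channel)  # False canary
```

The one-step-at-a-time promotion mirrors the gradient of constituencies in this pattern: each wider audience sees a build only after a narrower, more risk-tolerant audience has exercised it.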

Telecommunications vendors usually deploy software provisionally (called “soak”) so they can quickly roll back a deployment in the event that it is faulty. They may also introduce features in relatively low-risk markets prior to full release (e.g., if a new release has many changes to features large businesses commonly use, the telecom may first deploy the new release in a small town with a modest commercial customer base).

There is a risk that this strategy can dull the team’s attentiveness to kaizen mind (see Kaizen and Kaikaku) if the team starts to treat early-release constituencies as a safety net. The ScrumMaster should guard against this mentality by leading the team toward an increasingly aggressive Definition of Done.

[1] Ryan Paul. “Exclusive: a behind-the-scenes look at Facebook release engineering.” Ars Technica, http://arstechnica.com/business/2012/04/exclusive-a-behind-the-scenes-look-at-facebook-release-engineering/3/, 5 April 2012 (accessed 14 January 2017).

[2] “Google Chrome.” Wikipedia, https://en.wikipedia.org/wiki/Google_Chrome#Pre-releases, 6 June 2018 (accessed 9 June 2018).

[3] W. Edwards Deming. Out of the Crisis. Boston, MA: MIT Press, 2000, pp. 29-31.

Picture credits: Image Provided by PresenterMedia.com.