...a team has established its initial velocity (see Notes on Velocity). Velocity is likely to change over successive Sprints. It is essential to maintain a reliable Sprint forecast.

✥ ✥ ✥

You want the velocity to reflect the team’s current capacity, but it takes time for the velocity to stabilize after a change.

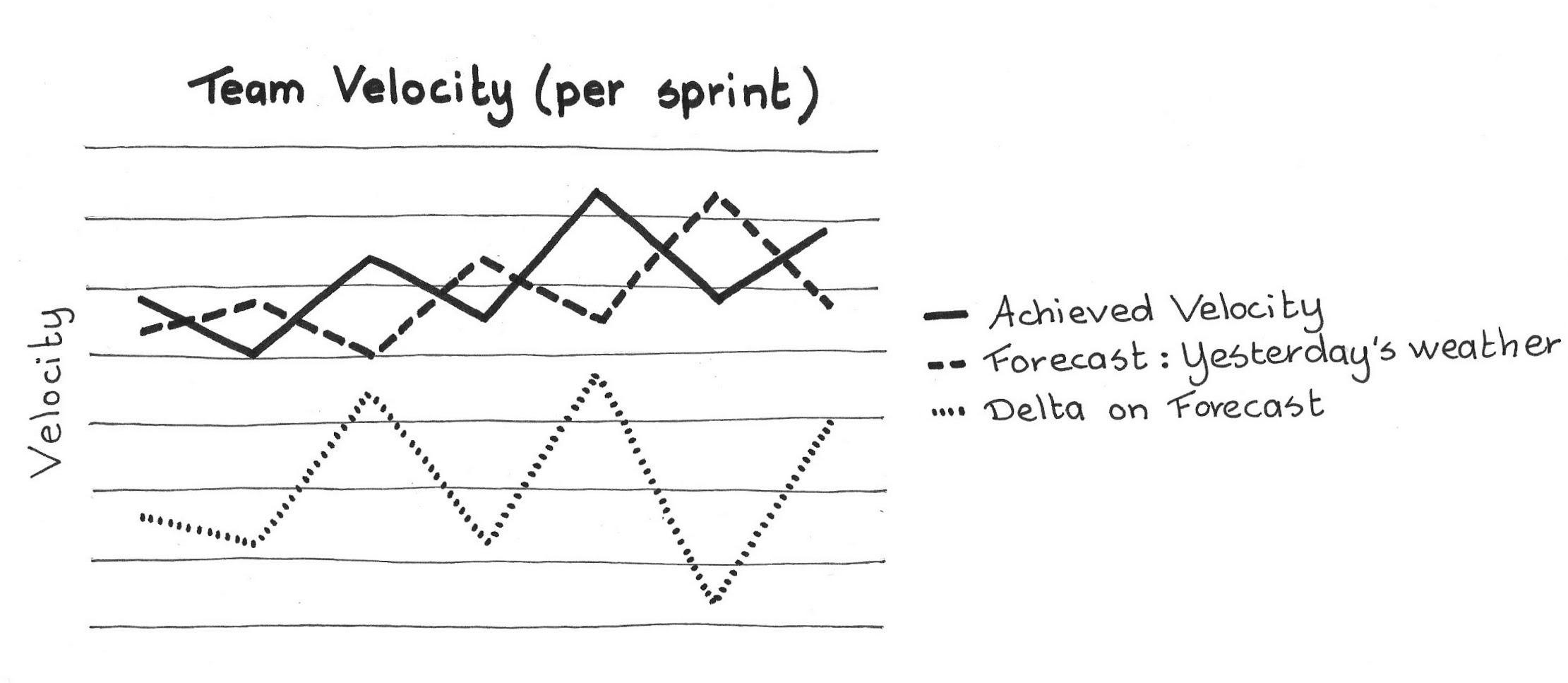

In most cases, a team’s velocity mirrors its past performance (see Yesterday’s Weather). One-time situations may cause a surprisingly large change in velocity. The velocity will probably return to its previous level in the next Sprint.

On the other hand, there is no such thing as a “stable velocity.” There is natural variance in the work, in people’s behavior, and in the requirements for any complex development. If the velocity were the same from Sprint to Sprint we would call it predictable manufacturing. Yesterday’s Weather is a good way to get started.

If the team implemented a process change and the velocity went up, it isn’t immediately clear whether the process change caused the velocity increase. It could be a coincidence ([1]), so it would be premature to adjust the expected capacity.

On the other hand, the process change may well have caused the velocity to increase. If this is the case, it would be more accurate to increase the expected capacity. Likewise, a dip in velocity may be a one-time event, in which case you might be tempted to ignore it. But a different “one-time event” may actually recur more often, so ignoring these might be fooling yourself. Yet we must not get overly excited about possible catastrophes.

Velocity is a motivating factor for teams—they like to see velocity increase. This can lead to unhealthy behavior: people might overreact when faced with sudden changes in velocity. For example, a sudden drop may provoke panic and emergency measures, while an incidental increase may become the next Sprint’s “required” velocity.

In summary, while an accurate picture of the team’s velocity is a crucial metric for both forecasting and for improvement, many things conspire against applying Yesterday’s Weather too simply.

Therefore:

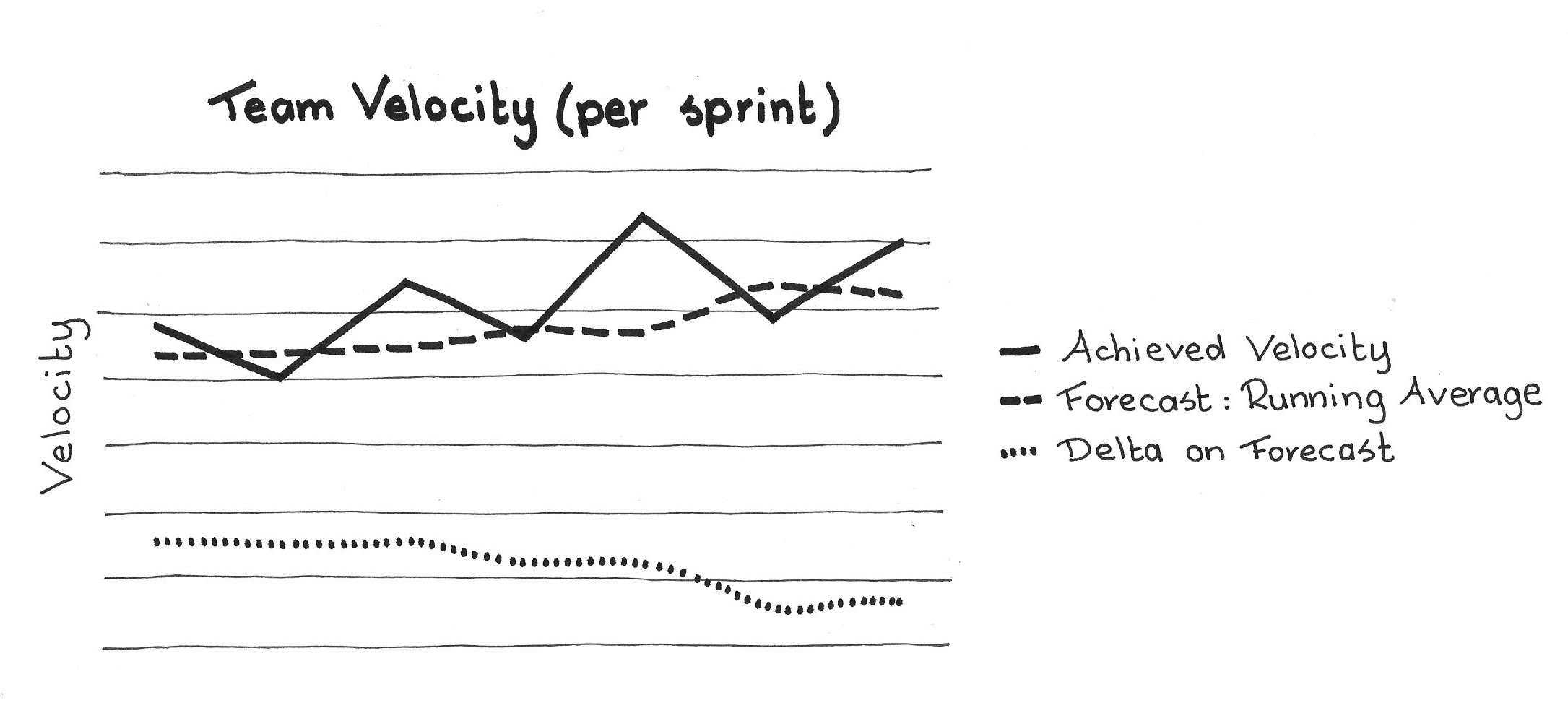

Use a running average of the most recent Sprints to calculate the velocity of the next Sprint.

To get a meaningful running average ([2], [3]), one should use at least three Sprints. Teams will often run three initial Sprints to establish the team’s velocity. Velocity is empirical: you can measure it but not assuredly predict it.

One might also use more than three Sprints for the moving average. A larger number decreases the impact of a single anomalous velocity.
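As a minimal sketch of the calculation, the following Python computes the running average over the most recent Sprints. The function name `running_average_velocity`, the `window` parameter, and the velocity numbers are illustrative assumptions, not part of the pattern itself.

```python
def running_average_velocity(velocities, window=3):
    """Return the mean of the last `window` Sprint velocities.

    Hypothetical helper for illustration. If fewer than `window`
    Sprints exist, it averages whatever history is available.
    """
    if window < 1:
        raise ValueError("window must be at least 1")
    if not velocities:
        raise ValueError("need at least one completed Sprint")
    recent = velocities[-window:]
    return sum(recent) / len(recent)


# Made-up history in which the fourth Sprint (40) is an anomaly.
history = [21, 24, 18, 40, 23]

three_sprint = running_average_velocity(history, window=3)  # (18 + 40 + 23) / 3 = 27.0
five_sprint = running_average_velocity(history, window=5)   # 126 / 5 = 25.2
```

With these numbers, the five-Sprint window gives a lower figure than the three-Sprint window because the anomalous Sprint carries less weight in the larger window.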

In release planning, the best teams apply a range of velocities rather than a single velocity ([4]). How to use velocity is outside the scope of this pattern; here, we focus only on how to calculate it. But one can easily use a running average together with a rough notion of variance that the team can derive from recent Sprints.
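One possible way to pair the running average with a rough notion of variance is sketched below. Using one sample standard deviation on either side of the average is an assumption made for illustration; the pattern does not prescribe a particular measure of spread, and `velocity_range` is a hypothetical name.

```python
from statistics import mean, stdev


def velocity_range(velocities, window=3):
    """Return (low, average, high) for the last `window` Sprints.

    Assumption: the spread is one sample standard deviation of the
    same recent Sprints. A team could equally use min/max or another
    rough measure.
    """
    recent = velocities[-window:]
    if len(recent) < 2:
        raise ValueError("need at least two Sprints to estimate spread")
    avg = mean(recent)
    spread = stdev(recent)
    # A velocity below zero is meaningless, so clamp the lower bound.
    return max(0.0, avg - spread), avg, avg + spread


# Made-up velocities for illustration.
low, avg, high = velocity_range([20, 22, 24])
```

For this history the average is 22 and the sample standard deviation is 2, so the sketch yields a range of roughly 20 to 24.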

Changing the team composition perturbs its velocity, and you might reset the moving average at this time. However, even if you don’t, the moving average will correct itself over a few Sprints.

✥ ✥ ✥

With a good velocity in hand, the Development Team can properly size the Sprint Backlog and can roughly forecast the content of the next few Sprints.

Velocity measures how much work the team gets Done within a Sprint; therefore, the team needs a Definition of Done.

There is a close relationship between velocity and estimation. Using a running average increases the reliability of a team’s estimation of how much work they will complete in a Sprint.

The effect is that any deviation in performance, either improvement or deterioration, has some impact on the velocity. This can encourage teams to improve velocity and to avoid things that reduce velocity.

An alternative approach is simply to keep an average of the velocity of all Sprints, and not bother with a moving average. Compared to a moving average, a cumulative average dilutes the impact of recent performance and retains the influence of old velocities from Sprints that may have followed different practices. Because teams should get better over time, you want to weight velocity in favor of recent activity. Therefore, a moving average is more accurate than a cumulative average.
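The difference between the two averages can be seen in a small sketch for a steadily improving team. The numbers and function names are made up for illustration.

```python
def cumulative_average(velocities):
    """Average over every Sprint the team has ever completed."""
    return sum(velocities) / len(velocities)


def moving_average(velocities, window=3):
    """Average over only the most recent `window` Sprints."""
    recent = velocities[-window:]
    return sum(recent) / len(recent)


# Hypothetical team whose velocity rises by 2 each Sprint.
history = [10, 12, 14, 16, 18, 20]

cumulative = cumulative_average(history)  # 90 / 6 = 15.0
moving = moving_average(history)          # (16 + 18 + 20) / 3 = 18.0
```

In this example the cumulative average lags well behind the team's most recent Sprint (20), while the moving average sits closer to it because the early Sprints no longer count.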

One downside to using a moving average is that an anomalous Sprint has some impact on the velocity for the next several Sprints. If its impact is large, it creates a one-time artificial opposite effect on the velocity when it leaves the moving window.
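A short sketch with made-up numbers shows both effects: the anomalous Sprint lifts the average for as long as it stays in the window, and the average drops again when it leaves. The helper name `rolling_velocities` is an assumption for illustration.

```python
def rolling_velocities(velocities, window=3):
    """Running average after each Sprint, once `window` Sprints exist."""
    return [
        sum(velocities[end - window:end]) / window
        for end in range(window, len(velocities) + 1)
    ]


# A steady team with a single anomalous Sprint (50).
history = [20, 20, 20, 50, 20, 20, 20]

rolling = rolling_velocities(history)
# rolling == [20.0, 30.0, 30.0, 30.0, 20.0]
```

Here the one unusual Sprint inflates the running average to 30 for three Sprints. The fall back to 20 reflects the anomaly leaving the window rather than any real change in the team's performance.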

Note that the team must couple a Running Average Velocity with a culture of sustainable improvement. There is natural pressure from managers and from the team itself to continually improve. Too much pressure can burn out the team and impel them to work long hours and even fake the numbers. Any of these factors can compromise velocity—as well as trust and pride.

This pattern is related to Updated Velocity. This pattern describes the calculation of velocity to smooth out anomalies; Updated Velocity describes when to raise the bar.

[1] Henry A. Landsberger. Hawthorne Revisited: Management and the worker: its critics, and developments in human relations in industry. Ithaca: Cornell University Press, 1958.

[2] Mike Cohn. Agile Estimating and Planning, 1st Edition. Englewood Cliffs, NJ: Prentice Hall, 2005.

[3] Kenneth S. Rubin. Essential Scrum: A Practical Guide to the Most Popular Agile Process. Reading, MA: Addison-Wesley Signature Series (Cohn), 2012.

[4] Mike Cohn. Succeeding With Agile. Reading, MA: Addison-Wesley, 2009.

Picture credits: Shutterstock.com.